AI Moves into the Control Loop – ABB Integrates Deep Learning Vision with Machine Automation

ABB has announced what it describes as a first for industrial automation: the seamless integration of AI-based machine vision directly into the machine control system. By embedding deep learning capabilities into its automation architecture, the company, through its machine and factory automation division B&R, has enabled vision data to feed advanced, real-time machine control loops.

For metrology and quality professionals, the development signals a significant shift. Machine vision is no longer a peripheral inspection tool; it is becoming an active participant in motion control, robotics and CNC-driven processes.

From “Flying Blind” to Closed-Loop Intelligence

As production processes increasingly rely on real-time feedback from imaging-based inspections, machine vision has grown in importance. Yet historically, its full potential has been constrained by limited integration into core control architectures.

In many systems, vision and control have effectively operated in parallel universes. Integrating a camera system into a machine that is already managing safety functions, motion control, robotics and CNC has often been complex and resource-intensive. As a result, machines have continued to ‘fly blind,’ with inspection results influencing downstream decisions rather than real-time machine behavior.

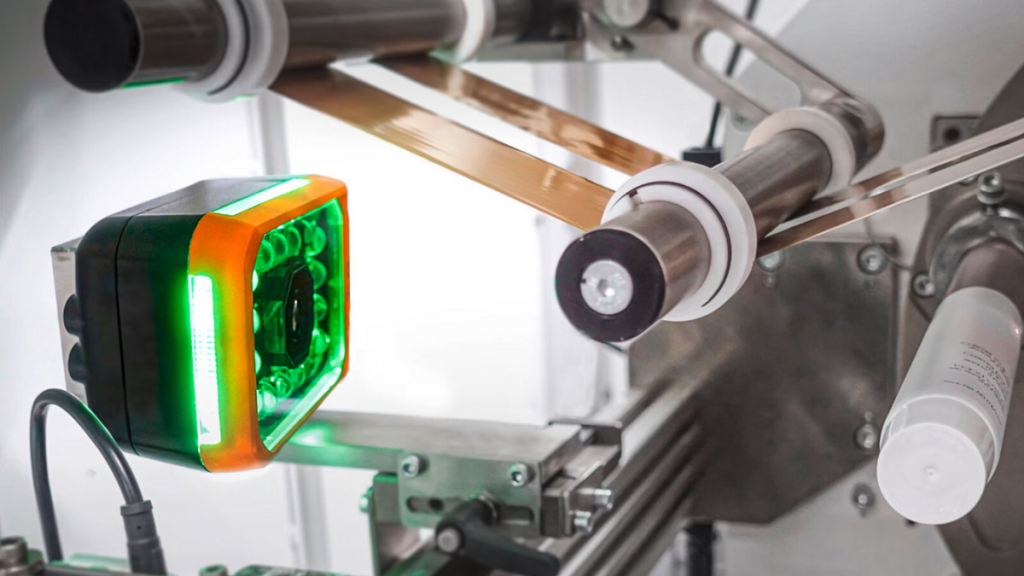

B&R’s latest AI-enabled Smart Camera portfolio changes that equation by bringing deep learning and rules-based vision directly into the control loop.

The new AI-based Smart Cameras integrate edge AI capabilities, allowing real-time vision processing and rules-based inspection without interrupting production. According to B&R, this is the first time machine vision technology has been embedded seamlessly into an automation system, replacing standalone vision sensors and PC-based processing with edge-driven applications.

This level of integration opens possibilities well beyond traditional quality inspection. Vision information can now be fed directly into control loops in real time, enabling cameras to synchronize with axis movements at microsecond precision. All hardware components require only a single cable, with optional hybrid connections enabling daisy-chain configurations.

The AI Smart Camera supports a comprehensive suite of AI-based functions, including:

- Anomaly detection

- Optical character recognition (OCR)

- Object detection and classification

These AI tools can be combined with deterministic, rules-based algorithms. The hybrid approach balances the adaptability of AI with the speed and repeatability of conventional vision systems. Complex inspection tasks—such as product type identification, subtle defect detection and printed code verification—can now be executed in a single pass using a single device.
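As an illustration of the hybrid approach, the sketch below combines a learned anomaly score with two deterministic checks in a single pass. It is not B&R's implementation: the `InspectionResult` fields, the thresholds and the `evaluate` function are hypothetical names chosen for this example, and the values are assumed to have been produced by the camera beforehand.

```python
from dataclasses import dataclass


@dataclass
class InspectionResult:
    # All three fields are hypothetical outputs of one image acquisition.
    anomaly_score: float      # learned model output, 0.0 means "looks normal"
    measured_width_mm: float  # rules-based dimensional measurement
    code_text: str            # text read by OCR


def evaluate(result, expected_code, width_range_mm=(9.8, 10.2),
             anomaly_threshold=0.5):
    """Return (passed, reasons), where reasons lists every failed check."""
    reasons = []
    # AI-based check: flags deviations that no explicit rule describes.
    if result.anomaly_score > anomaly_threshold:
        reasons.append("anomaly")
    # Deterministic check: fixed tolerance band, fully repeatable.
    low, high = width_range_mm
    if not (low <= result.measured_width_mm <= high):
        reasons.append("width")
    # Deterministic check: printed code must match the expected string.
    if result.code_text != expected_code:
        reasons.append("code")
    return (not reasons, reasons)


good_part = InspectionResult(0.1, 10.0, "LOT42")
print(evaluate(good_part, "LOT42"))  # (True, [])
```

Collecting every failed check, rather than stopping at the first, keeps the reject reason available for downstream sorting or statistics.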

Machine vision performance is fundamentally dependent on lighting. To address this, B&R has introduced a factory-calibrated lighting system that improves imaging repeatability by a factor of ten or more. The result is higher-quality input data for deep-learning models, fewer false positives and more stable long-term performance.

Lighting options include integrated illumination within the camera as well as external synchronized lighting modules. A dedicated Flash Controller ensures exact synchronization between light pulses and motion.

The rugged design supports decentralized mounting without performance compromise, while integrated strobe control eliminates the need for additional hardware. Automatic lighting modulation mitigates stray light and supports bright-field and dark-field illumination strategies.

Cost savings are achieved through single-wire connectivity, reduced cabling, elimination of external trigger sensors and simplified daisy-chain wiring.

High-Speed Synchronization Without External Encoders

A major technical breakthrough lies in sub-microsecond synchronization between vision, motion control and robotics. B&R’s patented fieldbus integration connects vision directly to the control loop, enabling tight coordination with motion axes, robotics and HMI communication.

Trigger signals can now originate directly from the controller or motion application. In high-speed, dynamically changing production environments, this eliminates the need for separate camera encoders.
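To see why a controller-originated trigger can replace a camera encoder, consider that the motion application already knows the axis position and velocity. A minimal sketch, assuming constant velocity over the short prediction window; the function name, parameters and the fixed `latency_s` compensation are illustrative assumptions, not part of any B&R API:

```python
def trigger_delay_s(axis_pos_mm, axis_vel_mm_s, camera_pos_mm, latency_s=0.0):
    """Time to wait before firing the camera so the part is in view.

    Assumes constant axis velocity and a known, fixed latency between
    issuing the trigger and the start of exposure.
    """
    if axis_vel_mm_s <= 0:
        raise ValueError("axis must be moving toward the camera")
    distance_mm = camera_pos_mm - axis_pos_mm
    delay = distance_mm / axis_vel_mm_s - latency_s
    if delay < 0:
        raise ValueError("part reaches the camera before a trigger can take effect")
    return delay


# Part is 50 mm upstream of the camera, moving at 2 m/s, 5 ms trigger latency.
delay = trigger_delay_s(100.0, 2000.0, 150.0, latency_s=0.005)
print(f"fire in {delay * 1000:.1f} ms")
```

Because the calculation uses the controller's own setpoints, it adapts automatically when line speed changes, which is the case the article describes for dynamically changing production environments.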

A newly developed just-in-time (JIT) compiler generates executable machine code when the application loads, rather than interpreting it during runtime. Combined with a quad-core processor, this reduces processing time for measurement tasks by up to 75%—without requiring expensive dedicated PCs. The system can seamlessly switch between AI models during operation.

mapp Vision: Integrated Engineering Framework

Central to the platform is B&R’s mapp Vision technology framework, fully integrated into the company’s automation ecosystem. mapp Vision combines hardware components and software tools within B&R’s Automation Runtime and Automation Studio engineering environment.

Using mapp Vision:

- Control programmers can implement vision tasks with minimal coding.

- No separate process variables are required.

- Smart Camera images can be integrated into HMI applications in just a few clicks.

- Camera parameters, lighting and trigger conditions can be modified on the fly.

- Applications are stored on the controller, preserving data even if a camera is replaced.

This tight integration allows multiple machines to be linked without sacrificing stability or inspection quality—an important consideration for scalable smart manufacturing architectures.

Deep Learning and Visual Anomaly Detection

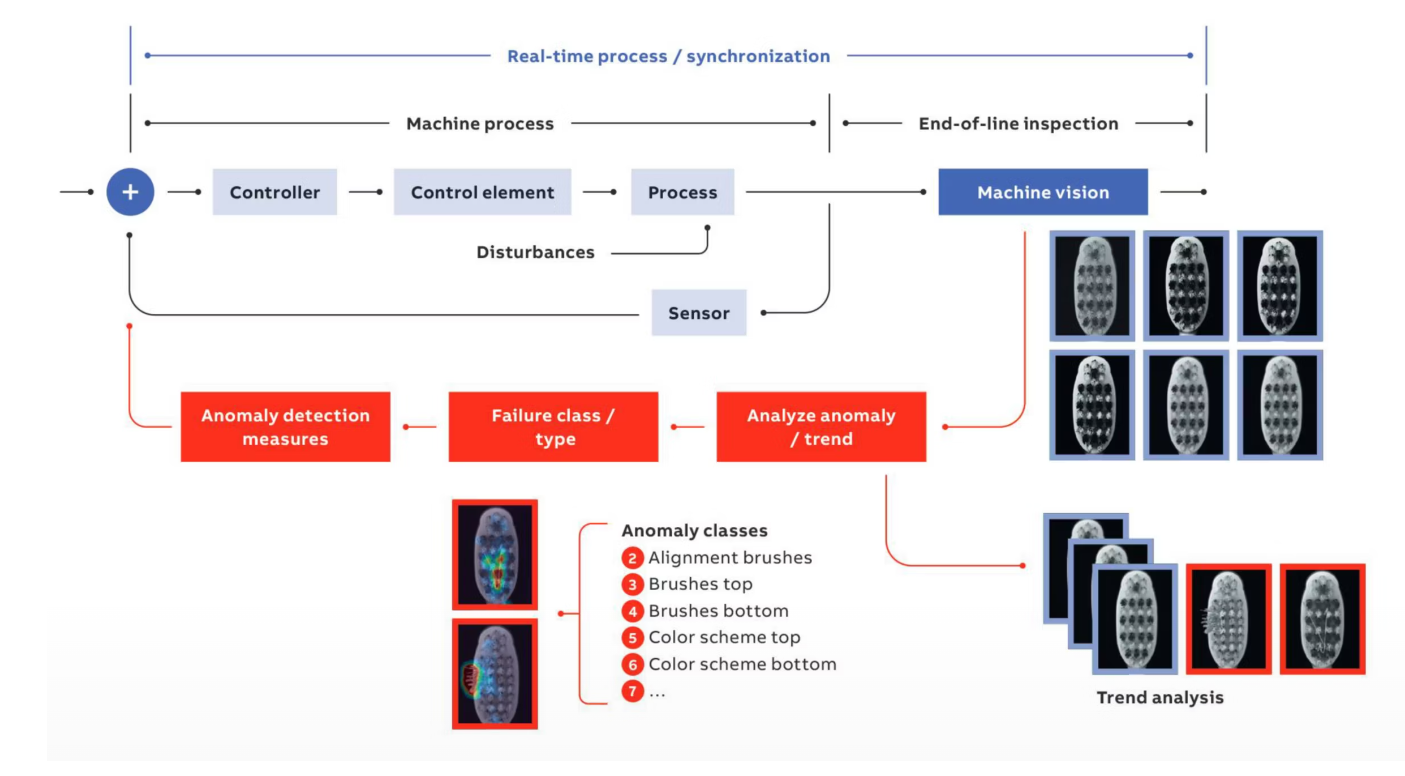

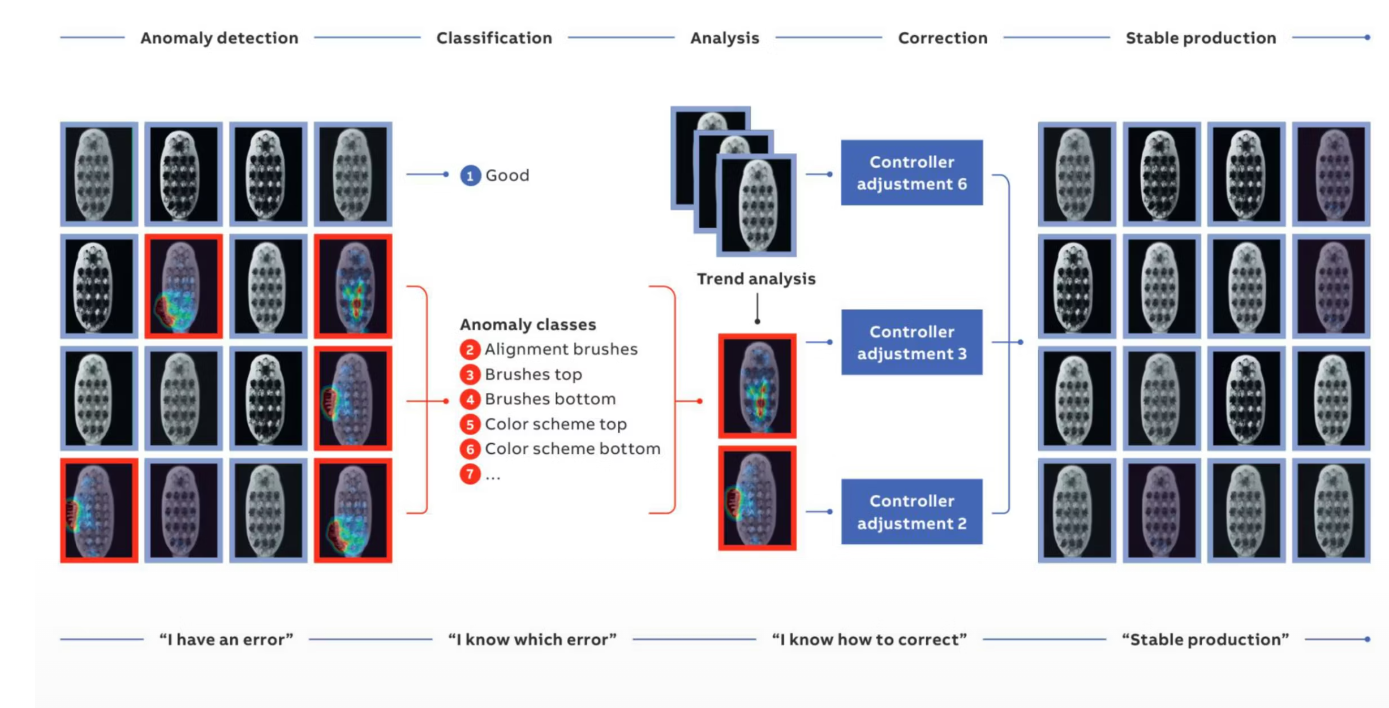

Industrial AI aims to enhance operational efficiency by automating repetitive inspection tasks, reducing human error and enabling real-time, data-driven decision-making. In manufacturing, anomaly detection plays a central role.

Anomalies, such as color deviations, scratches, misalignment, missing components or dimensional inconsistencies, are typically rare events relative to standard production output. Detecting them reliably is critical for reducing scrap, minimizing rework and improving quality.

Humans excel at recognizing visual deviations from normal patterns. Machine learning systems, however, have historically struggled due to limited anomaly samples and complex model architectures. Visual Anomaly Detection (VAD) addresses this by training models on images representing “good” production states, allowing rapid detection of deviations.
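The principle of learning only from good samples can be shown with a deliberately simple stand-in. The sketch below is not a deep-learning model and not the method used in the product: it models each pixel of a tiny grayscale image by its mean and spread across the good images, then scores a new image by its largest deviation. The function names and the `min_std` floor are assumptions made for this example.

```python
from statistics import mean, pstdev


def fit_good_model(good_images, min_std=1.0):
    """Learn per-pixel mean and spread from flattened 'good' images."""
    n_pixels = len(good_images[0])
    columns = [[img[i] for img in good_images] for i in range(n_pixels)]
    means = [mean(col) for col in columns]
    # Floor the spread so pixels that never varied do not divide by zero.
    stds = [max(pstdev(col), min_std) for col in columns]
    return means, stds


def anomaly_score(image, model):
    """Largest per-pixel deviation, in units of that pixel's spread."""
    means, stds = model
    return max(abs(p - m) / s for p, m, s in zip(image, means, stds))


# Three 4-pixel 'good' images with small natural variation.
good = [[100, 100, 100, 100], [102, 98, 100, 101], [98, 102, 100, 99]]
model = fit_good_model(good)

print(anomaly_score([100, 100, 100, 100], model))  # 0.0
print(anomaly_score([100, 100, 160, 100], model))  # 60.0
```

No defect images are needed at any point: the model only describes what normal looks like, and anything far outside that description scores high.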

B&R reports anomaly detection times of approximately 60 ms. When the cameras are equipped with AI processors from partner companies specializing in intelligent processing units, inference times are significantly reduced compared with conventional approaches.
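A detection time translates directly into a ceiling on line rate. The following back-of-the-envelope sketch assumes, purely for illustration, that images are processed one at a time and that the reported 60 ms covers the whole per-image evaluation; the function name and the `images_per_part` parameter are hypothetical.

```python
def max_parts_per_minute(inference_ms, images_per_part=1):
    """Upper bound on throughput when images are evaluated serially."""
    return 60_000 / (inference_ms * images_per_part)


print(max_parts_per_minute(60))     # 1000.0
print(max_parts_per_minute(60, 2))  # 500.0
```

Under these assumptions, a single camera would support up to about 1,000 parts per minute with one image per part. Real limits also depend on exposure, transfer and handling times.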

Using established anomaly detection methodologies from industrial image processing partners, the system typically requires between 30 and 100 images for training, depending on failure complexity. Training is simulated offline using mapp Vision software, and no additional hardware is required.

Application Example: High-Speed Consumer Goods

In fast-moving consumer goods sectors, such as toothbrush manufacturing, production lines operate at extreme synchronization levels. CNC-driven tufting machines precisely embed bristle bundles into rotating, turret-positioned handles.

Integrating AI-based vision into this environment enables real-time fault detection without slowing production. During training, approximately 60 ‘good’ images can be used to teach the system normal production characteristics. The deep-learning model is then parameterized to identify defined failure modes, distinguishing subtle defects under high-speed conditions.

The result is higher productivity, reduced waste and enhanced quality assurance—without compromising throughput.

Implementation Realities

Large numbers of customers across multiple market sectors are currently trialling the system. However, full deployment, including model training and machine learning optimization, can take up to 12 months. Manufacturers are often cautious about publicizing such initiatives during development to protect competitive advantage.

Embedding AI into manufacturing processes presents both technical and organizational challenges. Models must be stable, reproducible and aligned with business objectives. Deep-learning systems can be computationally intensive, and integration into existing automation infrastructure requires careful engineering.

Yet the direction of travel is clear.

New Era for Integrated Metrology and Control

By embedding AI-driven machine vision directly into the automation backbone, ABB and B&R are redefining the role of imaging in industrial systems. Vision is no longer an external quality gate; it is becoming an active control parameter within high-speed production environments.

For the metrology community, this marks a critical evolution: inspection data is transitioning from post-process validation to real-time process optimization. As AI moves decisively into the control loop, machines are no longer flying blind—they are beginning to see, decide and act in one unified system.

For more information: www.abb.com